AI Guidelines for Schools: BlueSky’s Approach to Ethical AI Use

Artificial intelligence has transformed education faster than any technology we’ve seen before. While schools around the US grapple with questions about student AI use, one question often gets overlooked: How should educators themselves use AI?

In this post, we’ll share how we’ve approached this question at BlueSky: how our staff use AI tools, our “bill of rights” approach to guidelines, what parents think, and more.

AI Ethics Training

The turning point came during an internal AI ethics training at BlueSky. Our staff members shared how they were using AI in their daily work, and the list was quite long: over 100 different tools and applications. Our teachers were using AI for everything from creating reading materials and generating rubrics to translating parent communications and analyzing student data.

As we looked at our staff’s different uses, we also identified a few areas where we wanted to be especially thoughtful. For example, one teacher had started using AI to help grade student writing assignments. While we know AI can be useful for quick feedback activities, we believe that some assignments—like writing exercises—benefit most from personalized, human insight.

These early learning moments led us to an important question: If our staff don’t know how to appropriately use AI, how can we expect them to teach and model responsible use to students?

Why AI in Education Is Different

According to recent surveys, 84% of educators now use AI tools in the classroom, a higher share than most people would guess. What’s perhaps even more surprising is that only 32% report being hesitant to integrate AI without formal guidance, which suggests that the remaining 68% are willing to move forward without clear direction.

For BlueSky Principal Daniel Ondich, who has spent 18 years in online and blended education, the AI shift stands apart from other technology changes. “The biggest shift I’m seeing is that it’s making access to information much easier and quicker to find,” Ondich explains. “Skilled use of generative AI can closely mimic a user’s voice and perspective, making it very challenging to detect.”

The rapid evolution is another key difference. While previous educational technologies rolled out gradually, AI advances weekly, making it challenging for us to keep up.

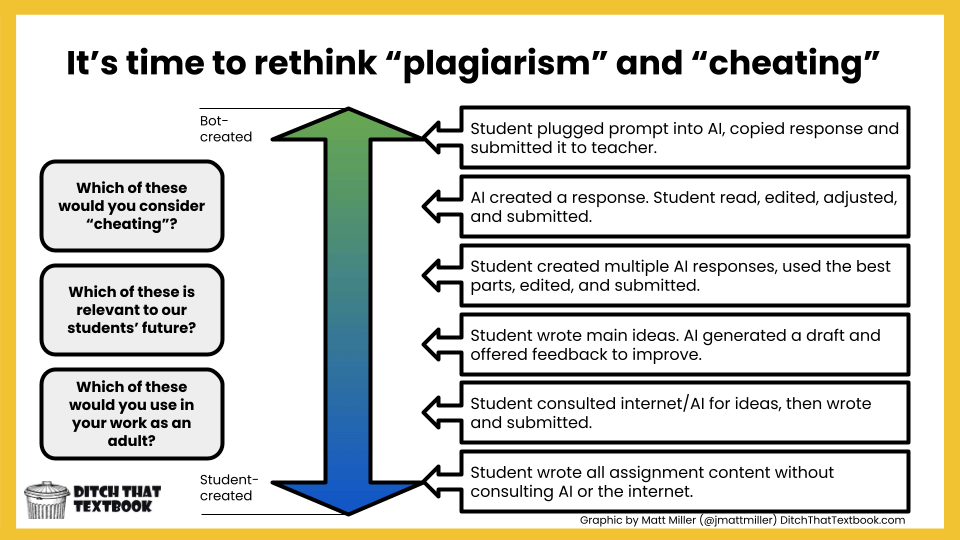

Rethinking “Cheating” in the AI Era

Before creating guidelines, we needed to confront an uncomfortable truth: the traditional concept of academic dishonesty doesn’t quite fit the AI age.

Consider this spectrum of AI use:

- A student plugs a prompt into AI, copies the response, and submits it

- AI creates a response, but the student reads, edits, and adjusts it before submitting

- A student creates multiple AI responses, uses the best parts, edits, and submits

- A student writes the main ideas, then AI generates a draft and offers feedback to improve it

- A student consults AI for ideas, then writes and submits their own work

- A student writes all assignment content without consulting AI or the internet

Which of these would you consider cheating? Which are relevant to students’ futures? Which would you use in your work as an adult?

These aren’t hypothetical questions—they’re the questions we face daily at BlueSky. AI detectors aren’t reliable enough to answer them, and accusations of cheating often miss the nuance of how AI can be used ethically as a learning tool.

BlueSky’s Solution: The “Bill of Rights” Approach

Rather than creating rigid policies that would become outdated within months, our leadership, tech committee, and curriculum committee collaborated on something more flexible: overarching guidelines modeled after the Constitution’s ability to evolve over time.

The key insight? We focused on how we use AI, not specific use cases.

We developed five core principles that apply to both students and staff:

1. Human-Driven: We use AI as a tool to support critical thinking, making our own ideas the priority. For our teachers, this means maintaining personal feedback. For our students, it means keeping learning and growth at the center.

2. Ethical Use: Our students should inform teachers when they use AI and explain why. Our teachers demonstrate ethical use and disclose when AI is used in courses. The question Ondich wrestles with: “Should AI content that has been paraphrased be cited, while content created with an AI-generated outline, but no specific content generated, not be cited? Where do you draw the line?” That, like much of AI use, is case-based and left to the discretion of the educator.

3. Time & Place: There’s an appropriate context for every tool. At BlueSky, our teachers guide when and how students use AI, just as they would any learning resource. Our curriculum development process considers whether technology and AI enhance student learning or help meet specific learning goals.

4. Safe Use: For our students, this means learning to protect personally identifiable information (PII) while interacting with AI. For our staff, it means strict alignment with FERPA, COPPA, and Minnesota Student Data Privacy Act requirements.

5. Efficiency: We believe AI should increase efficiency while maintaining human review for staff and keeping learning as the central purpose for students. As Ondich notes, “Depending on the purpose, it may just require a quick review to make sure what was created is accurate. For other instances, AI should only be used as a guide that supports idea generation and organization.”

Teaching Our Students to Use AI Responsibly

At BlueSky, we don’t just tell students about AI ethics—we’re teaching it explicitly. We’ve integrated AI literacy throughout our curriculum:

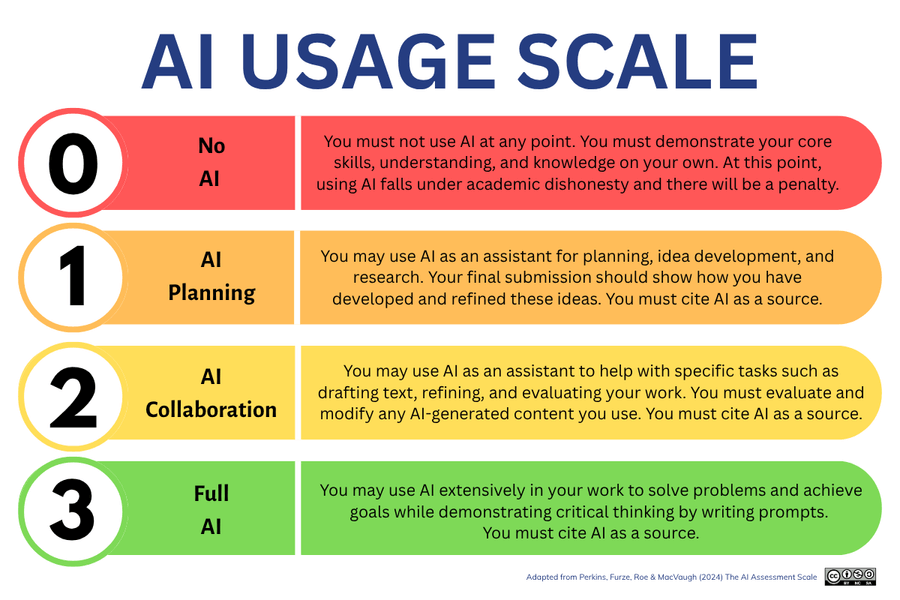

AI Usage Scale: Similar to other schools’ approaches, we use a 0-3 scale clearly posted at the top of each learning experience, showing students exactly what level of AI use is permitted.

Direct Instruction: All our middle school students receive academic honesty training that covers AI.

Practical Skills: Our courses cover MLA citation for AI, creating effective prompts, recognizing bias in AI, comparing different AI tools, and using AI to review and revise writing, all skills that employers are looking for.

Process Over Product: “For many learning experiences that have students using AI, what our teachers are looking at isn’t the answer, it’s how students get the answer,” Ondich explains. “What prompts are being used. How students are interacting with AI to get the best possible information.”

What Parents Think

Schools developing AI policies should know: parents are paying attention. According to a 2025 Learner.com survey, parents’ biggest concerns about AI in schools include over-reliance on technology (70.9%), misinformation or biased learning materials (67.1%), loss of personal touch in learning (61.6%), ethical implications (46.1%), and data privacy concerns (44.6%).

But here’s the flip side: 67% of parents think learning about AI and related technologies should be mandatory in high school curriculum.

The message is clear—parents want their children prepared for an AI-integrated world, but they want it done thoughtfully and safely.

The Underutilized Opportunity

When asked where AI is underutilized in education, Ondich doesn’t hesitate: data analysis.

“I’d love to explore integrating AI ethically within our systems to improve identification of struggling students so we can prevent students from getting behind and quickly intervene,” he says.

We’ve already conducted studies using AI to analyze student pacing. The goal is to identify the earliest point at which we can accurately tell that a student is falling behind, is unlikely to complete all learning experiences, or is at risk of failing. This allows us to provide helpful, timely intervention.
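
For readers who want to picture what a pacing check might look like, here is a minimal sketch in Python. It is not BlueSky’s actual analysis. It assumes work is spread evenly across a term, and every name and threshold in it (`PacingRecord`, `pacing_status`, the 0.8 and 0.5 cutoffs) is hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class PacingRecord:
    """Hypothetical record of one student's progress in one course."""
    student_id: str
    completed: int  # learning experiences finished so far
    total: int      # learning experiences in the course


def expected_completed(start: date, end: date, today: date, total: int) -> float:
    """Expected number of finished items, assuming an even pace over the term."""
    term_days = (end - start).days
    if term_days <= 0:
        raise ValueError("end must be after start")
    # Clamp elapsed days so dates outside the term do not distort the result.
    elapsed = min(max((today - start).days, 0), term_days)
    return total * elapsed / term_days


def pacing_status(
    record: PacingRecord,
    start: date,
    end: date,
    today: date,
    behind_ratio: float = 0.8,   # assumed cutoff, not from the source
    at_risk_ratio: float = 0.5,  # assumed cutoff, not from the source
) -> str:
    """Return 'on_pace', 'behind', or 'at_risk' for one student."""
    expected = expected_completed(start, end, today, record.total)
    if expected == 0:
        return "on_pace"  # term has not started, nothing is due yet
    ratio = record.completed / expected
    if ratio < at_risk_ratio:
        return "at_risk"
    if ratio < behind_ratio:
        return "behind"
    return "on_pace"


if __name__ == "__main__":
    term_start, term_end = date(2025, 1, 1), date(2025, 4, 11)
    check_date = date(2025, 2, 20)
    for rec in [
        PacingRecord("A", completed=20, total=40),
        PacingRecord("B", completed=15, total=40),
        PacingRecord("C", completed=8, total=40),
    ]:
        print(rec.student_id, pacing_status(rec, term_start, term_end, check_date))
```

A real analysis would likely look at patterns over time rather than a single snapshot, and any flag like this would be a prompt for a teacher to check in, not a decision in itself.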

Advice for Other Schools

Ondich’s recommendation for school leaders is straightforward: “Embrace AI by understanding how it’s currently being used in your school and take control by establishing use guidelines that support your school’s mission and vision.”

The guidelines don’t need to be perfect—AI is evolving too quickly for that. They need to be:

- Flexible enough to adapt as AI technology changes

- Clear enough to guide decisions when questions arise

- Aligned with your school’s core values and educational philosophy

- Applicable to all users—students, teachers, and staff

Looking Ahead

Will AI eventually be accepted in standardized testing the way calculators were in math class? Ondich thinks so. “When calculators were first invented and became affordable for the average person, they weren’t allowed. Now they are embraced as part of most math courses. I expect we’ll see something similar happen with AI.”

At BlueSky, the response has been generally positive. Many of our staff members are excited to use AI to improve efficiency in their work and in the classroom. The biggest downside? An increase in students using AI inappropriately to complete assignments—precisely why explicit teaching about ethical AI use matters so much to us.

The reality is simple: kids are not going to wait for perfect AI policies before they start using these tools. Schools can either guide that learning or watch it happen without them. If you’re interested in learning more about BlueSky’s approach to AI or have any questions, feel free to reach out here.